💡 Personal Projects

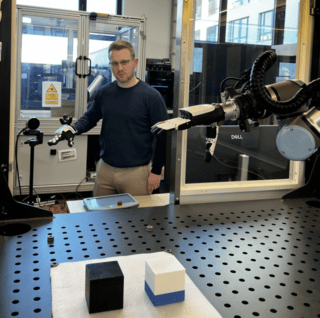

I built a complete teleoperation setup to control a robot hand and arm, then used it to collect demonstrations and train an adapted 3D Diffusion Policy. My focus was on industrial scenarios that involve handling multiple identical parts. This is normally a nightmare for visuomotor policies because the model cannot distinguish between identical objects based solely on the global features of the vision encoder. Current solutions that rely on textual conditioning are insufficient in an industrial context.

In addition to running experiments on how the policy behaves with unknown objects and areas, I also found a way to train the model to indicate when it has finished the task, so that control can reliably switch back to a classical motion planner.

Features :

- Developed a teleoperation and imitation learning pipeline using a 3D camera and a data glove in ROS 2.

- Trained Diffusion Policies to enable robust grasping capabilities in multi-object scenes.

- Created a full MoveIt model for safe and reliable motion planning and execution.

- Applied the resulting policies to industrial automation scenarios.

Tech Stack :

Traffic Sign Recognition with YOLOv8

Developed as part of a university project at Heilbronn University, this real-time traffic sign recognition system uses YOLOv8n for fast and robust detection. It handles challenging conditions like occlusion, poor lighting, and complex backgrounds by leveraging a custom synthetic dataset, multi-stage classification, and real-time frame filtering.

Features :

- Real-time traffic sign detection using YOLOv8n (Nano version for speed and efficiency).

- Custom synthetic dataset generation with COCO backgrounds and heavy augmentation.

- Two-stage classification specifically for speed limit signs.

- Frame caching logic to reduce false positives during inference.

- Visualization via UI overlay: persistent speed sign display + rotating multi-sign view.

- Trained on 3000+ synthetic images and validated with GTSDB and dashcam footage.

- Fast inference: ~0.06–0.09 seconds per frame (roughly 11–17 FPS).
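
The frame caching idea from the feature list can be illustrated with a small sliding-window filter: a sign class is only reported once it has been detected in enough of the most recent frames. This is a minimal sketch and not the project's actual implementation; the class name `DetectionCache` and the parameters `window` and `min_hits` are assumptions made for illustration.

```python
from collections import Counter, deque


class DetectionCache:
    """Confirm a class only after it appears in enough recent frames.

    Hypothetical helper: `window` and `min_hits` are illustrative values.
    """

    def __init__(self, window=5, min_hits=3):
        # Keep only the label sets of the last `window` frames.
        self.window = deque(maxlen=window)
        self.min_hits = min_hits

    def update(self, frame_labels):
        """Add one frame's labels and return the currently confirmed labels."""
        self.window.append(set(frame_labels))
        # Count in how many cached frames each label was seen.
        counts = Counter(label for labels in self.window for label in labels)
        return {label for label, n in counts.items() if n >= self.min_hits}


cache = DetectionCache(window=5, min_hits=3)
frames = [["stop"], ["stop", "yield"], [], ["stop"], ["stop"]]
confirmed = [cache.update(f) for f in frames]
print(confirmed)
```

In this example the single-frame `yield` detection is never confirmed, while `stop` is reported from the fourth frame onward, once it has been seen in three cached frames.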

Tech Stack :

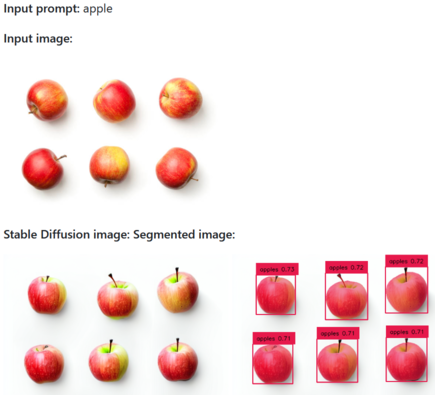

Stabled Grounding SAM

Stabled Grounding SAM is a powerful tool for generating synthetic datasets with pre-segmented images. It combines Stable Diffusion, Grounding DINO, and Segment Anything to create annotated datasets from just a single input image and a label file.

Features :

- Generates synthetic images from a single input image using Stable Diffusion's `img2img`.

- Automatically detects and labels objects using Grounding DINO.

- Refines segmentations using Meta’s Segment Anything Model (SAM).

- Outputs datasets in YOLO format for easy training integration.

- Great for quickly building vision datasets without manual labeling.
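
To illustrate what YOLO-format output means in practice, the sketch below converts a pixel-space bounding box into one line of a YOLO detection label file: a class index followed by the box center, width, and height, all normalized by the image size. It assumes detection-style labels; the function name `to_yolo_line` and the sample values are hypothetical and not taken from the tool itself.

```python
def to_yolo_line(class_id, box, img_w, img_h):
    """Convert a pixel box (x_min, y_min, x_max, y_max) to a YOLO label line.

    Hypothetical helper for illustration; values are normalized to [0, 1].
    """
    x_min, y_min, x_max, y_max = box
    x_center = (x_min + x_max) / 2 / img_w
    y_center = (y_min + y_max) / 2 / img_h
    width = (x_max - x_min) / img_w
    height = (y_max - y_min) / img_h
    return f"{class_id} {x_center:.6f} {y_center:.6f} {width:.6f} {height:.6f}"


# Example: a 200x200 px box in a 640x480 image, class index 0.
line = to_yolo_line(0, (100, 200, 300, 400), 640, 480)
print(line)  # 0 0.312500 0.625000 0.312500 0.416667
```

Each image gets one text file with one such line per object, which is the layout YOLO training pipelines typically expect.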